Empathy as a natural consequence of learnt reward models

Conjecture

Feb 4, 2023

Epistemic Status: Pretty speculative but built on scientific literature. This post builds off my previous post on learnt reward models. Crossposted from my personal blog.

Empathy, the ability to feel another's pain or to 'put yourself in their shoes', is often considered to be a fundamental human cognitive ability, and one that undergirds our social abilities and moral intuitions. As so much of humanity's success and dominance as a species comes down to our superior social organization, empathy has played a vital role in our history. Whether we can build artificial empathy into AI systems also has clear relevance to AI alignment. If we can create empathic AIs, then it may become easier to make an AI receptive to human values, even if humans can no longer completely control it. Such an AI seems unlikely to just callously wipe out all humans to make a few more paperclips. Empathy is not a silver bullet, however. Although (most) humans have empathy, human history is still in large part a history of us waging war against each other, and there are plenty of examples of humans and other animals perpetrating terrible cruelty on enemies and outgroups.

A reasonable literature has grown up in psychology, cognitive science, and neuroscience studying the neural bases of empathy and its associated cognitive processes. We now know a fair amount about the brain regions involved in empathy, what kind of tasks can reliably elicit it, how individual differences in empathy work, as well as the neuroscience underlying disorders such as psychopathy, autism, and alexithymia which result in impaired empathic processing. However, much of this research does not grapple with the fundamental question of why we possess empathy at all. Typically, it seems to be tacitly assumed that, due to its apparent complexity, empathy must be some special cognitive module which has evolved separately and deliberately due to its fitness benefits. From an evolutionary theory perspective, empathy is often assumed to have evolved because of its adaptive function in promoting reciprocal altruism. The story goes that animals that are altruistic, at least in certain cases, tend to get their altruism reciprocated and may thus tend to out-reproduce other animals that are purely selfish. This would be of especial importance in social species where being able to form coalitions of likeminded and reciprocating individuals is key to obtaining power and hence reproductive opportunities. If they could, such coalitions would obviously not include purely selfish animals who never reciprocated any benefits they received from other group members. Nobody wants to be in a coalition with an obviously selfish freerider.

Here, I want to argue a different case. Namely, that the basic cognitive phenomenon of empathy -- that of feeling and responding to the emotions of others as if they were your own -- is not a special cognitive ability which had to be evolved for its social benefit, but instead is a natural consequence of our (mammalian) cognitive architecture and therefore arises by default. Of course, given this base empathic capability, evolution can expand, develop, and contextualize our natural empathic responses to improve fitness. In many cases, however, evolution actually reduces our native empathic capacity -- for instance, we can contextualize our natural empathy to exclude outgroup members and rivals.

The idea is that empathy fundamentally arises from using learnt reward models to mediate between a low-dimensional set of primary rewards and reinforcers and the high-dimensional latent state of an unsupervised world model. In the brain, much of the cortex is thought to be randomly initialized and to implement a general purpose unsupervised (or self-supervised) learning algorithm, such as predictive coding, to build up a general purpose world model of its sensory input. By contrast, the reward signals to the brain are very low dimensional (if not, perhaps, scalar). There is thus a fearsome translation problem that the brain needs to solve: learning to map the high-dimensional cortical latent space into a predicted reward value. Due to the high dimensionality of the latent space, we cannot hope to actually experience the reward for every possible state. Instead, we need to learn a reward model that can generalize to unseen states. Possessing such a reward model is crucial for learning values (i.e. long term expected rewards), for predicting future rewards from the current state, and for performing model-based planning, where we need the ability to query the reward function at hypothetical imagined states generated during the planning process. We can think of such a reward model as just performing a simple supervised learning task: given a dataset of cortical latent states and realized rewards (given the experience of the agent), predict what the reward will be in some other, non-experienced cortical latent state.
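As a sketch, the supervised task can be written down as follows. The notation is introduced here purely for illustration, and the squared-error loss is an assumption; the argument only needs some learnt regressor from latent states to rewards.

```latex
\hat{r}_\theta : \mathbb{R}^d \to \mathbb{R}, \qquad
\theta^\ast = \arg\min_\theta \sum_{i=1}^{N} \left( \hat{r}_\theta(z_i) - r_i \right)^2
```

Here z_i is the i-th cortical latent state the agent has actually experienced (a d-dimensional vector, with d large), r_i is the primary reward it received in that state, and r̂_θ is the learnt reward model. Value learning and planning then query the fitted model at latent states that were never experienced, which is where its generalization behaviour starts to matter.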

The key idea that leads to empathy is the fact that, if the world model performs a sensible compression of its input data and learns a useful set of natural abstractions, then it is quite likely that the latent codes for the agent performing some action or experiencing some state, and another, similar, agent performing the same action or experiencing the same state, will end up close together in the latent space. If the agent's world model contains natural abstractions for the action, which are invariant to who is performing it, then a large amount of the latent code is likely to be the same between the two cases. If this is the case, then the reward model might 'mis-generalize' to assign reward to another agent performing the action or experiencing the state rather than the agent itself. This should be expected to occur whenever the reward model generalizes smoothly and the latent space codes for the agent and another are very close in the latent space. This is basically 'proto-empathy' since an agent, even if its reward function is purely selfish, can end up assigning reward (positive or negative) to the states of another due to the generalization abilities of the learnt reward function [1].
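A toy numerical sketch may make the mechanism concrete. Everything in it is an assumption made for illustration: the latent code is a set of agent-invariant action features plus a single small 'who is acting' coordinate, the reward model is a linear ridge regression, and the names `make_latent` and `fit_ridge` are hypothetical helpers rather than anything from the literature.

```python
import numpy as np

def make_latent(action_feats, is_self, tag_scale=0.3):
    """Latent code = agent-invariant action features + a small 'who' tag.

    The single tag coordinate (+tag_scale for self, -tag_scale for other)
    is a toy stand-in for whatever part of the code distinguishes agents.
    """
    tag_value = tag_scale if is_self else -tag_scale
    tag = np.full((action_feats.shape[0], 1), tag_value)
    return np.hstack([action_feats, tag])

def fit_ridge(Z, r, lam=1e-2):
    """Closed-form ridge regression: w = (Z^T Z + lam*I)^{-1} Z^T r."""
    return np.linalg.solve(Z.T @ Z + lam * np.eye(Z.shape[1]), Z.T @ r)

rng = np.random.default_rng(0)
n_train, n_test, d_action = 500, 200, 8

# Purely 'selfish' ground truth: reward depends only on the agent's own state,
# and the agent only ever collects (latent, reward) pairs for itself.
true_w = rng.normal(size=d_action)
train_feats = rng.normal(size=(n_train, d_action))
w = fit_ridge(make_latent(train_feats, is_self=True), train_feats @ true_w)

# Query the learnt model on the same abstract states,
# once attributed to 'self' and once attributed to 'other'.
test_feats = rng.normal(size=(n_test, d_action))
pred_self = make_latent(test_feats, is_self=True) @ w
pred_other = make_latent(test_feats, is_self=False) @ w

transfer = np.corrcoef(pred_self, pred_other)[0, 1]
print(f"correlation between self and other predictions: {transfer:.3f}")
```

In this setup the model never sees a training example with the 'other' tag, so nothing pushes it to treat such states differently, and the rewards it predicts for another agent track those it predicts for itself. A nonlinear reward model, or a world model with a richer self/other code, could of course show much weaker transfer.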

In neuroscience, discussions of action and state invariance often centre around 'mirror neurons', which are neurons which fire regardless of whether the animal is performing some action or whether it is just watching some other animal performing the same action. But, given an unsupervised world model, mirror neurons are exactly what we should expect to see. They are simply neurons which respond to the abstract action and are invariant to the performer of the action. This kind of invariance is no weirder than translation invariance for objects, and simply is a consequence of the fact that certain actions are 'natural abstractions' and not fundamentally tied with who is performing them [2].

Completely avoiding empathy at the level of the latent space would require learning an entirely ego-centric world model, such that any action I perform, or any feeling I feel, is represented as a completely different and orthogonal latent state to any other agent performing the same action or experiencing the same feeling. There are good reasons for not naturally learning this kind of entirely ego-centric world model with a complete separation in latent space between concepts involving self and concepts involving others. The primary one is its inefficiency: it requires a duplication of all concepts into a concept-X-as-relates-to-me and a concept-X-as-relates-to-others. This would require at best twice as much space to store, and twice as much data to learn, as a mingled world model where self and other are not completely separated.
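The ego-centric alternative can be illustrated with a minimal sketch, again under toy assumptions: a linear ridge reward model, and a hypothetical `egocentric_latent` encoder that gives self and other disjoint blocks of the latent vector so that their codes are exactly orthogonal.

```python
import numpy as np

def egocentric_latent(feats, is_self):
    """Give self and other disjoint (orthogonal) blocks of the latent code."""
    zeros = np.zeros_like(feats)
    blocks = [feats, zeros] if is_self else [zeros, feats]
    return np.hstack(blocks)

def fit_ridge(Z, r, lam=1e-2):
    """Closed-form ridge regression: w = (Z^T Z + lam*I)^{-1} Z^T r."""
    return np.linalg.solve(Z.T @ Z + lam * np.eye(Z.shape[1]), Z.T @ r)

rng = np.random.default_rng(0)
d = 8
feats = rng.normal(size=(500, d))
reward = feats @ rng.normal(size=d)  # reward experienced only for own states

Z_self = egocentric_latent(feats, is_self=True)
w = fit_ridge(Z_self, reward)

# Same abstract states, attributed to self and to another agent.
probe = rng.normal(size=(200, d))
pred_self = egocentric_latent(probe, is_self=True) @ w
pred_other = egocentric_latent(probe, is_self=False) @ w

latent_width = Z_self.shape[1]  # every concept is stored twice
print(latent_width, np.abs(pred_self).mean(), np.abs(pred_other).max())
```

With fully separated codes the reward model has no weights on the 'other' block, so nothing transfers, but the price is a latent vector twice as wide, and anything learnt about a concept for self has to be learnt again for others.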

This theory of empathy makes some immediate predictions. Firstly, the more 'similar' the agent and its empathic target are, the more likely the latent state codes are to be similar, and hence the more likely reward generalization is, leading to greater empathy. Secondly, empathy is a continuous spectrum, since closeness-in-the-latent-space can vary continuously. This is exactly what we see in humans, where large-scale studies find that humans are better at empathising with those closer to them, both within species -- i.e. people empathise more with those they consider in-groups -- and across species, where the amount of empathy people show a species is closely correlated with its phylogenetic divergence time from us. Thirdly, the degree of empathy depends on both the ability of the reward model to generalize and the ability of the world model to produce a latent space which well represents the natural abstractions of its environment. This suggests, perhaps, that empathy is a capability that scales along with model capacity -- larger, more powerful reward and world models may tend to lead to greater, more expansive empathic responses, although they potentially may have reward models that can make finer grained distinctions as well.
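The first two predictions can be sketched numerically. The example below is a toy under stated assumptions: a Gaussian-kernel ridge regression stands in for 'a reward model that generalizes smoothly', and the latent code carries one hypothetical coordinate measuring how dissimilar the represented agent is from the self (zero for all training data, since only the agent's own rewards are ever experienced).

```python
import numpy as np

def rbf_kernel(A, B, length=1.0):
    """Gaussian kernel matrix between the rows of A and the rows of B."""
    sq_dist = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-sq_dist / (2.0 * length**2))

rng = np.random.default_rng(0)
n = 200
pain = rng.uniform(0.0, 1.0, size=(n, 1))      # shared 'pain intensity' feature
context = rng.uniform(-1.0, 1.0, size=(n, 1))  # an unrelated state feature
dissim = np.zeros((n, 1))                      # training data is all 'self'
Z_train = np.hstack([pain, context, dissim])
r_train = -pain[:, 0]                          # own pain is negatively rewarding

# Kernel ridge regression: alpha = (K + lam*I)^{-1} r
lam = 1e-2
K = rbf_kernel(Z_train, Z_train)
alpha = np.linalg.solve(K + lam * np.eye(n), r_train)

# The same painful state, attributed to agents of increasing dissimilarity.
dissimilarities = np.array([0.0, 0.5, 1.0, 2.0, 4.0])
probes = np.array([[0.9, 0.0, s] for s in dissimilarities])
predicted = rbf_kernel(probes, Z_train) @ alpha

for s, p in zip(dissimilarities, predicted):
    print(f"dissimilarity {s:.1f}: predicted reward {p:+.4f}")
```

Under these assumptions the predicted (negative) reward for another agent's pain shrinks smoothly towards zero as that agent's code moves away from the self, rather than switching off at some threshold. The shape of the fall-off is set entirely by the kernel chosen here and should not be read as a claim about brains.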

Finally, this phenomenon should be fairly fundamental. We should expect it to occur whenever we have a learnt reward model predicting the reward or values of a general unsupervised world-model latent state. This is, and will increasingly be, a common setup when we have agents in environments for which we cannot trivially evaluate the 'true reward function', especially over hypothetical imagined states. Moreover, this is also the cognitive architecture used by mammals and birds, which possess a set of subcortical structures which evaluate and dispense rewards, and a general unsupervised world-model implemented in the cortex (or pallium for birds). This is also exactly what we see, with empathic behaviour being apparently commonplace in the animal kingdom.

The mammalian cognitive architecture that results in empathy is actually pretty sensible and it is possible that the natural path to AGIs is with such an architecture. It doesn't apply to classical utility maximizers based on model-based planning, such as AIXI, but as soon as you don't have a utility oracle, which can query the utility function in arbitrary states, you are stuck instead with learning a reward function based on a set of 'ground-truth' actually-experienced rewards. Once you start learning a reward function, it is possible that the generalization this produces can result in empathy, even for some 'purely selfish' utility functions. This is potentially quite important for AI alignment. It means that, if we build AGIs with learnt reward functions, and the latent states in their world model involving humans are quite close to their latent states involving themselves, then it is very possible that they will naturally develop some kind of implicit empathy towards humans. If this happened, it would be quite a positive development from an alignment perspective, since it would mean that the AGI intrinsically cares, at least to some degree, about human experiences. The extent to which this occurs would be predicted to depend upon the similarity in the latent space between the AGI's representation of human states and its own. The details of the AGI's world model and training curriculum would likely be very important, as would be the nature of its embodiment. There are reasons to be hopeful about this, since the AGI will almost certainly be trained almost entirely on human text data and human-created environments, and be given human-relevant goals. This will likely lead to it gaining quite a good understanding of our experiences, which could lead to closeness in the latent space. On the negative side, the phenomenology and embodiment of the AGI are likely to be very different -- in a distributed datacenter interacting directly with the internet, as opposed to having a physical bipedal body and a small, non-copyable brain.

Given reasonable interpretability and control tooling, this line of thought could lead to methods to try to make an AGI more naturally empathic towards humans. This could include carefully designing the architecture or training data of the reward model to lead it to naturally generalize towards human experiences. Alternatively, we may try to directly edit the latent space so as to bring our desired empathic targets to within the range of generalization of the reward models. Finally, during training, by presenting it with a number of 'test stimuli', we should be able to precisely measure the extent and kinds of empathy it has. Similarly, interpretability on the reward model could potentially reveal the expected contours of empathic responses.
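As one hedged illustration of what measuring empathy with test stimuli might look like, the sketch below defines a hypothetical `empathy_index`: the least-squares slope of the reward model's predictions on 'other' probes against its predictions on matched 'self' probes. The metric, the probe construction, and the toy reward model are all assumptions made for this example, not an established procedure.

```python
import numpy as np

def empathy_index(reward_model, z_self, z_other):
    """Slope of predictions on 'other' probes against matched 'self' probes.

    1.0 means predicted reward transfers fully to the other agent,
    0.0 means no transfer, and negative values mean the sign flips.
    """
    r_self = np.asarray(reward_model(z_self), dtype=float)
    r_other = np.asarray(reward_model(z_other), dtype=float)
    r_self_c = r_self - r_self.mean()
    r_other_c = r_other - r_other.mean()
    return float(r_self_c @ r_other_c / (r_self_c @ r_self_c))

# Toy stand-ins: a linear reward model, and 'other' codes that are
# attenuated copies of the matched 'self' codes.
rng = np.random.default_rng(0)
w = rng.normal(size=6)

def toy_reward_model(Z):
    return Z @ w

z_self = rng.normal(size=(100, 6))
z_other = 0.4 * z_self

index = empathy_index(toy_reward_model, z_self, z_other)
print(round(index, 3))
```

In practice the matched probe pairs would have to come from the agent's actual world model, for example by encoding the same scenario once with the agent as protagonist and once with a human, and that pairing is the hard part this sketch assumes away.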

Empathy in the brain

Now that we have thought about the general phenomenon and its applications to AI safety, let's turn towards the neuroscience and the specific cognitive architecture that is implemented in mammals and birds. Traditionally, the neuroscience of empathy splits up our natural conception of 'empathy' into three distinct phenomena, each underpinned by a dissociable neural circuit. These three facets are neural resonance, prosocial motivation, and mentalizing/theory of mind. Neural resonance is the visceral 'feeling of somebody else's pain' that we experience during empathy, and it is close to the reward model evaluation of others' states we discuss here. Prosocial motivation is essentially the desire to act on empathic feelings and be altruistic because of them, even if it comes at personal cost. Finally, mentalizing is the ability to 'put oneself in another's shoes' -- i.e. to simulate their inner cognitive processes. These processes are argued to be implemented in dissociable neural circuits. Mentalizing is thought to occur primarily in the high-level association areas of cortex, including the precuneus and especially the temporo-parietal junction (TPJ). Neural resonance is thought to occur primarily by utilizing cortical areas involved with sensorimotor and emotional processing, such as the anterior cingulate and insular cortex, as well as subcortical structures such as the amygdala. Finally, prosocial motivation is thought to be implemented in the regions typically related to goal-directed behaviour, such as the VTA in the mid-brain and the orbitofrontal and prefrontal cortex. However, in practice, for ecologically realistic stimuli, these processes do not occur in isolation but always tend to co-occur with each other. Furthermore, empathy in general, and neural resonance especially, also appears to be modality specific. For instance, viewing a conspecific in pain will tend to activate the 'pain matrix': the network of brain regions which are also activated when you yourself are in pain.

Mentalizing, despite being the most 'cognitively advanced' process, is actually the easiest to explain and is not really related to empathy at all. Instead, we should expect mentalizing and theory of mind to just emerge naturally in any unsupervised world-model with sufficient capacity and data. That is, modelling other agents as agents, and explicitly simulating their cognitive state is a natural abstraction which has an extremely high payoff in terms of predictive loss. Intuitively, this is quite obvious. Other agents apart from yourself are a real phenomenon that exists in the world. Moreover, if you can track the mental state of other agents, you can often make many very important predictions about their current and future behaviour which you cannot if you model them as either non-agentic phenomena or alternatively as simple stimulus-response mappings without any internal state.

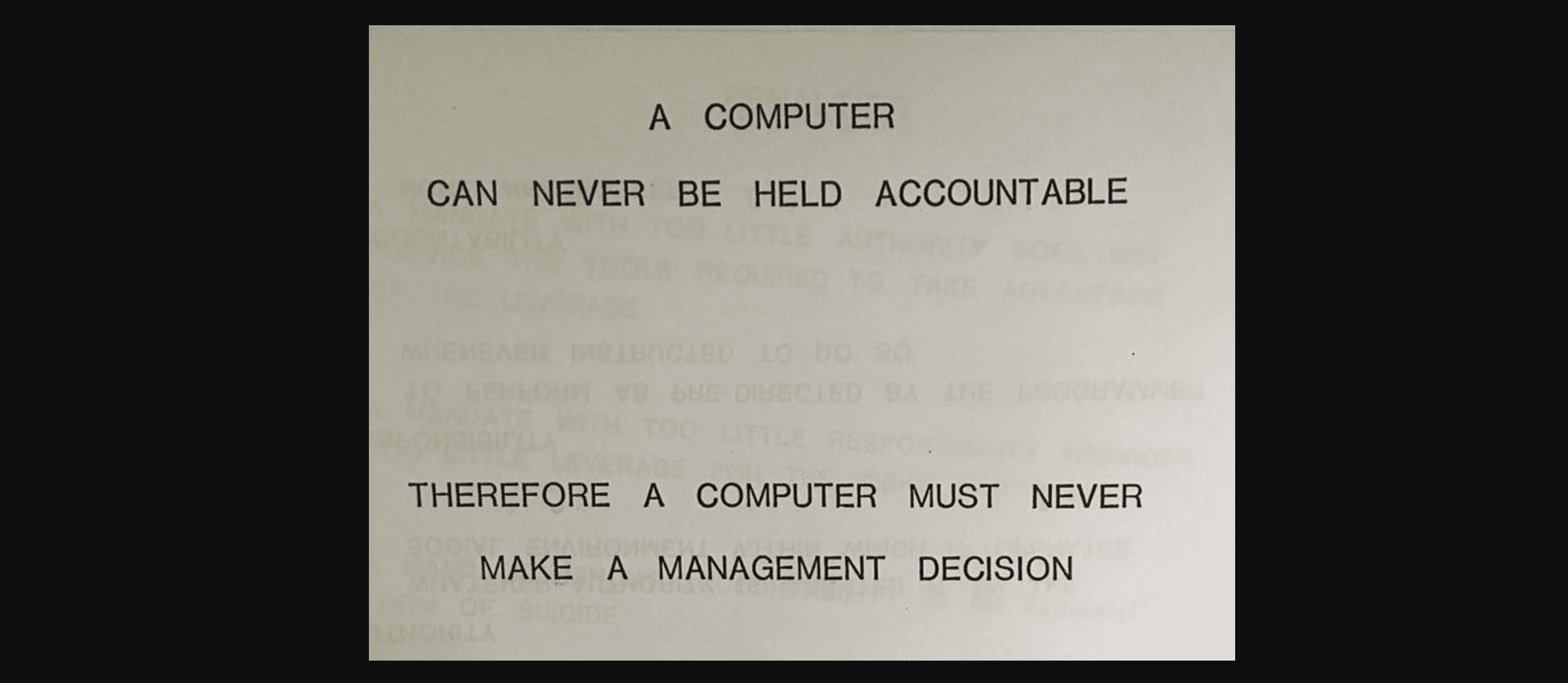

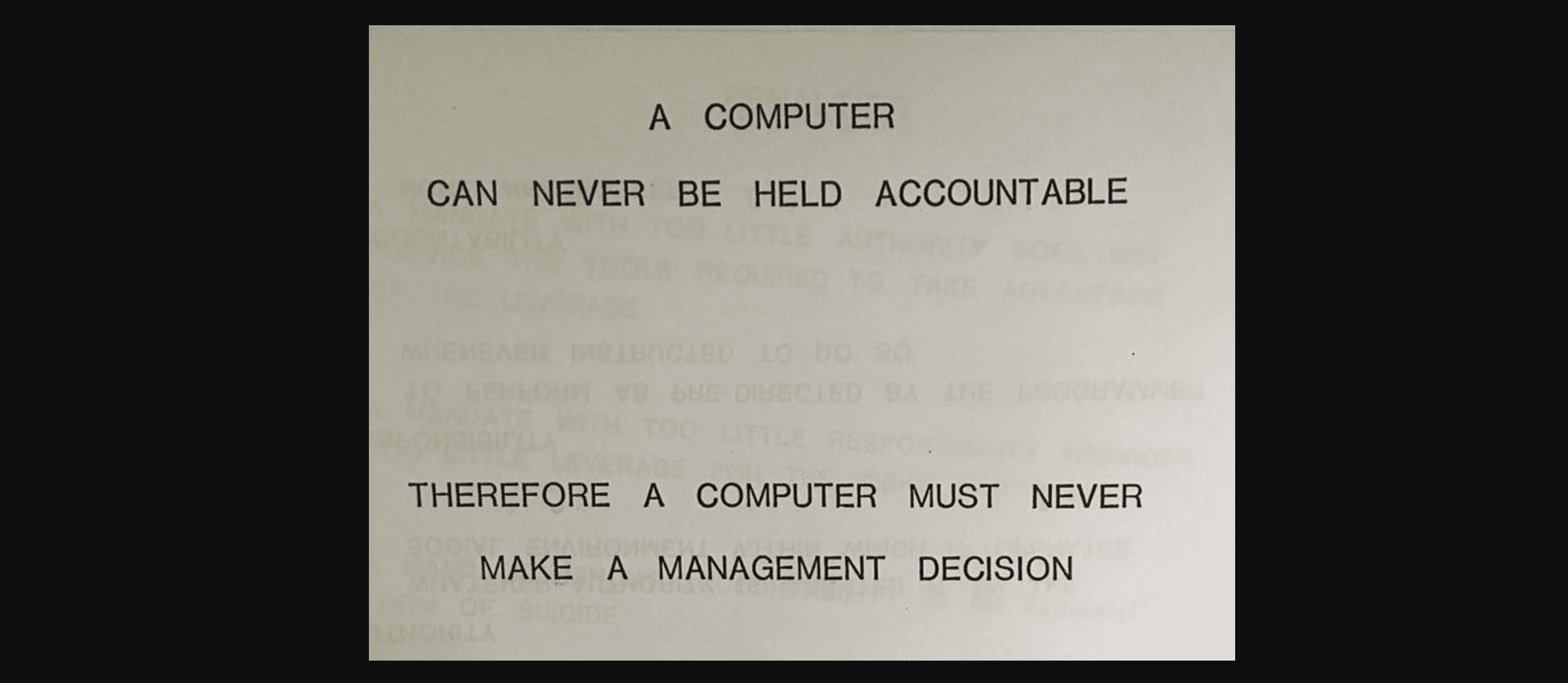

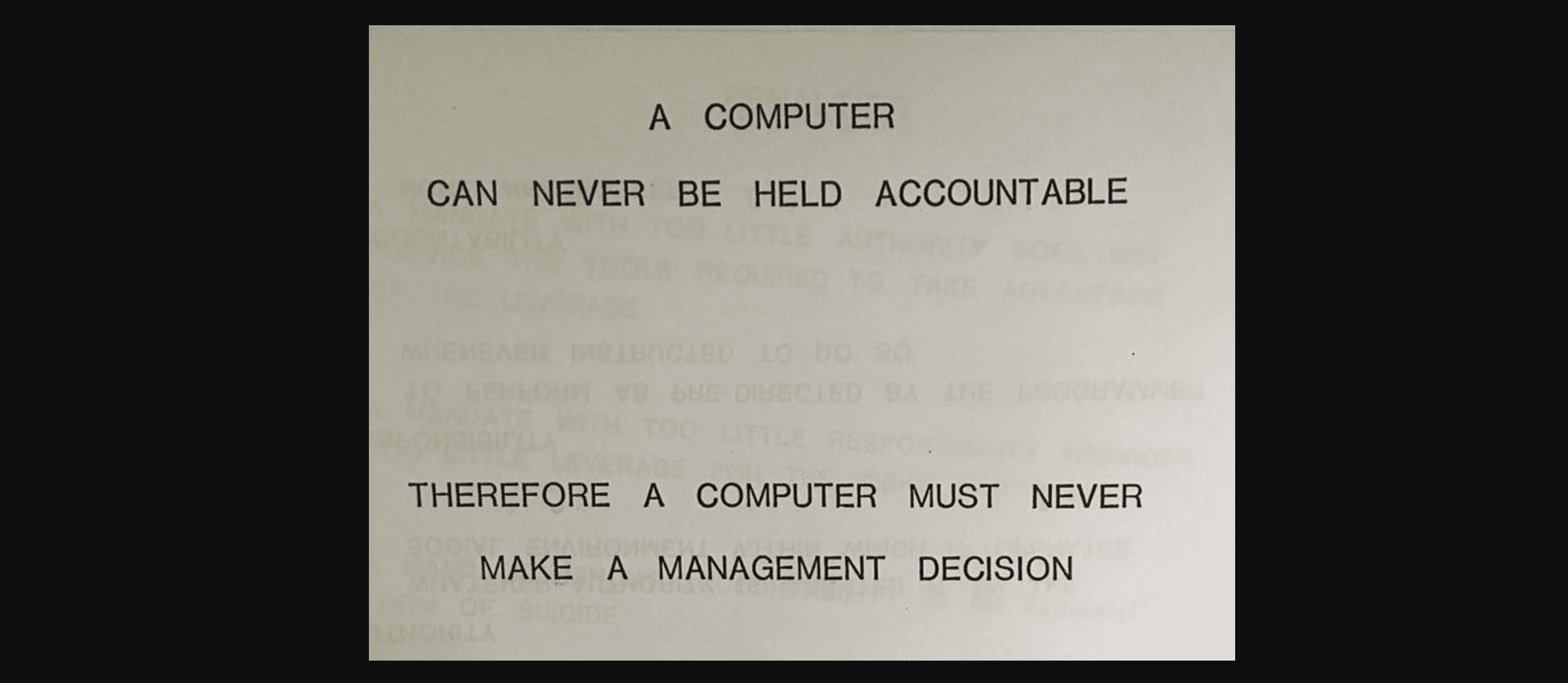

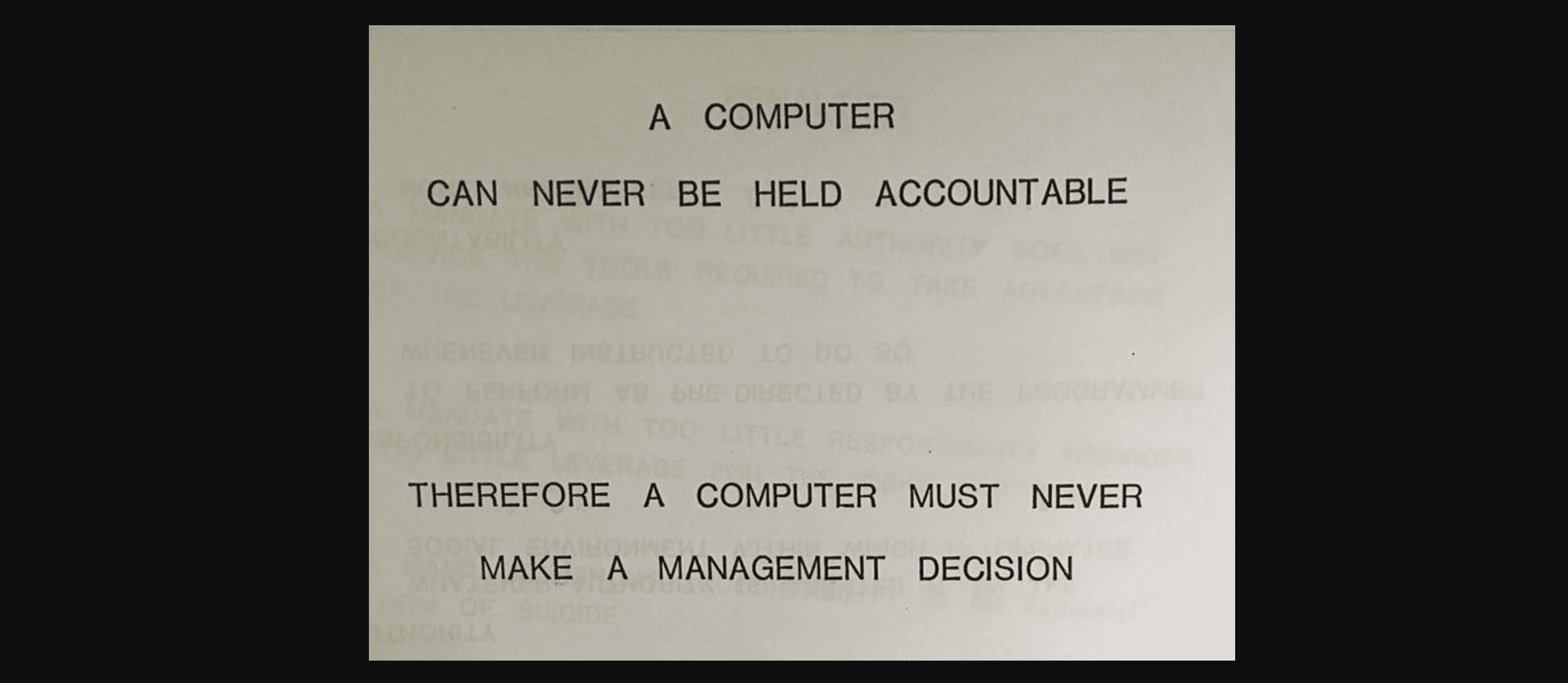

However, because it is expected to arise from any sufficiently powerful unsupervised learning model, mentalizing is completely dissociable from having any motivational component based on empathy. An expected utility maximizer like AIXI should possess a very sophisticated theory of mind and mentalizing capability, but zero empathy. Like the classical depiction of a psychopath, AIXI can perfectly simulate your mental state, but feels nothing if its actions cause you distress. It only simulates you so as to better exploit you to serve its goals. Of course, if we do have motivational empathy, then we have a desire to address and reduce the pain of others, and being able to mentalize is very useful for coming up with effective plans to do that. This, I suspect, is why mentalizing regions are so often co-activated in empathy tasks.

Secondly, there is prosocial motivation. My argument is that this is precisely the kind of reward model generalization presented earlier. Specifically, the brain possesses a reward model, learnt in the VTA and basal ganglia from cortical inputs, which predicts the values and rewards expected given certain cortical states. It is learnt by associating cortical latent states with previously experienced primary rewards fed through to the VTA. These predicted rewards are then used to train high-level cortical controllers to query the unsupervised world model to obtain action plans with high expected rewards. As described previously, if this reward model misgeneralizes so as to assign reward to a state of others' pain or pleasure, as opposed to our own, then the brain should naturally develop this kind of prosocial motivation, in exactly the same way it develops motivation to reduce its own pain and increase its own pleasure based on the same reward model.

Finally, we come to neural resonance. I would argue this is the physiologically oldest and most basic form of empathy, and that it occurs due to a very similar mechanism of model misgeneralization. Only this time, it is not the classic RL-based reward model in the VTA that is misgeneralizing, but a separate reflex-association model implemented primarily in the amygdala and related circuitry such as the stria terminalis and periaqueductal grey. In my previous post, I argue that there are two separate behavioural systems in the brain: one that is reward-based and built on RL, and one which predicts brainstem reflexes and other visceral sensations based on supervised predictive learning -- i.e. it associates a current state with a future visceral sensation. While the prosocial motivation system is fundamentally based on the RL system, neural resonance arises from this brainstem-prediction circuit. That is, we have a circuit that is constantly parsing cortical latent states for information predictive of experiencing pain, or needing to flinch, or needing to fight or flee, or any other visceral sensation or decision controlled by the brainstem. When this circuit misgeneralizes, it takes a cortical latent representation of another agent experiencing pain, sees that it contains many 'pain-like' features, and then predicts that the agent itself will experience pain shortly, and thus drives the visceral sensation and compensatory reflexes. This, we argue, is the root of neural resonance.

Widespread empathy in animals

Our theory argues that empathy is a fundamental and basic result of the mammalian cognitive architecture, and hence a clear prediction that results is that essentially all mammals should show some degree of empathy -- primarily in terms of neural resonance and prosocial behaviour. Although the evidence on this is not 100% conclusive for all mammals, almost every animal species studied appears to show at least some degree of empathy towards conspecifics. A large amount of work has been done investigating this in mice and rats. Studies have found that rats which see another rat being given painful electric shocks become more sensitive to pain themselves -- evidence of neural resonance. Similarly it has been shown that rats will not pull a lever which gives them food (a positive reward) if it leads to another rat being given a painful shock. Other species for which there is much evidence of empathy include elephants, dolphins and, of course, apes and monkeys.

Outside of apes and monkeys, dolphins and elephants, as well as corvids, also appear in anecdotal reports and the scientific literature to have many complex forms of empathy. For instance, both dolphins and elephants appear to take care of and nurse sick or injured individuals, even non-kin, as well as grieve for dead conspecifics. They also appear to be able to use mentalizing behaviours to anticipate the needs of their conspecifics -- for instance, bringing them food or supporting them if injured. Apes (as well as corvids and human children) also show all of these empathic behaviours, and are also known to perform 'consolation behaviours' where bystanders will go up to and comfort distressed fellows, especially the loser of a dominance fight. This behaviour can be shown to occur both in captivity and in the wild.

Contextual modulation of empathy

Overall, while we argue that the basic fundamental forms of empathy effectively arise due to misgeneralization of learnt reward models, and are thus a fundamental feature of this kind of cognitive architecture, this does not mean that our empathic responses are not also sculpted by evolution. Many of our emotions and behaviours take this basic level of empathy and elaborate on it in various ways. Much of our social behaviour and reciprocal altruism is based on a foundation of empathy. Moreover, evolutionarily hardwired behaviours like parenting and social bonding are likely deeply intertwined with our proto-empathic ability. An example of this is the hormone oxytocin, which is known to boost our empathic response, as well as being deeply involved in both parental care and social bonding.

On the other hand, evolution may also have given us mechanisms that suppress our natural empathic response rather than accentuate it. Humans, as well as other animals, are easily able to override or inhibit their sense of empathy when it comes to outgroups or rivals of various kinds, exactly as predicted by evolutionary theory. This is also why normal empathetic people are reliably able to sanction or inflict terrible cruelties on others. Humans, chimpanzees, and rodents are all able to modulate their empathic response to be greater for those they are socially close with and less for defectors or rivals (often becoming negative, as schadenfreude). Similarly, an MEG study of adolescents who grew up in an intractable conflict (the Israel-Palestine conflict) found that both Israeli-Jews and Palestinian-Arabs had an initial spike of empathy towards both ingroup and outgroup stimuli. However, this was followed by a top-down inhibition of empathy towards the outgroup and an increase of empathy towards the ingroup. This top-down inhibition of empathy must be cortically based and learnt from scratch based on contextual factors (since evolution cannot know a priori who is ingroup and outgroup). The fact that top-down inhibition of the 'natural' empathy can be learnt is probably also why, empirically, research on genocides has typically found that any kind of mass murder requires a long period of dehumanization of the enemy, to suppress people's natural empathic response to them.

All of this suggests that while the fundamental proto-empathy generated by the reward model generalization is automatic, the response can also be shaped by top-down cortical context. From a machine learning perspective, this means that humans must have some kind of cortical learnt meta-reward model which can edit the reward predictions flexibly based on information and associations coming from the world-model itself.
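One very simple way such a meta-reward model could be wired up is as a multiplicative gate on the automatic prediction. The sketch below is only that: the tanh gate, the two context features, and the hand-set weights are all assumptions chosen for illustration, and the function name `modulated_reward` is hypothetical.

```python
import numpy as np

def modulated_reward(base_reward, context, gate_weights):
    """Scale the automatic empathic reward by a context-dependent gate in (-1, 1)."""
    gate = np.tanh(context @ gate_weights)
    return float(gate * base_reward)

# Hypothetical context features: [social closeness, perceived rivalry].
# The weights are hand-set here; in the proposal they would be learnt
# from associations in the world model.
gate_weights = np.array([2.0, -3.0])

# Bottom-up prediction from the reward model: observed pain is aversive.
base = -1.0

friend = modulated_reward(base, np.array([1.0, 0.0]), gate_weights)
stranger = modulated_reward(base, np.array([0.2, 0.0]), gate_weights)
rival = modulated_reward(base, np.array([0.0, 1.0]), gate_weights)

print(f"friend {friend:+.2f}, stranger {stranger:+.2f}, rival {rival:+.2f}")
```

With these weights a friend's pain stays strongly aversive, a stranger's pain is attenuated, and a rival's pain flips sign, loosely mirroring the shift towards schadenfreude described above. A gate that first lets the automatic response through and only later suppresses it, as in the MEG result, would need some notion of time that this sketch leaves out.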

Psychopaths etc

Another interesting question for this theory is how and why psychopathy and other empathic disorders exist. If empathy is such a fundamental phenomenon, how do we appear to get impaired empathy in various disorders? Our response to this is that the classic cultural depiction of a psychopath as someone otherwise normal (and often highly functioning) but just lacking in empathy is not really correct. In fact, psychopaths do show other deficits, typically in emotional control, disinhibited behaviour, blunted affect (not really feeling any emotions) and often pathological risk-taking. Neurologically, psychopathy is typically associated with a hypoactive and/or abnormal amygdala, among other deficits, often including impaired VTA connectivity leading to deficits in decision-making and learning from reinforcement (especially punishments). According to our theory, this suggests that psychopathy is not really a syndrome of lacking empathy, but instead one of having abnormal and poor learnt reward models mapping cortical latent states to base rewards and visceral reflexes. Abnormal empathy is then a consequence of the abnormal reward model and its (lack of) generalization ability.

Our theory is very similar to the Perception-Action-Mechanism (PAM), and the closely related 'simulation theory' of empathy. Both argue that empathy occurs because our brain essentially learns to map representations of others experiencing some state to our own representations for that state. Our contribution is essentially to argue that this isn't some kind of special ability that must be evolved, but rather a natural outcome of an architecture which learns a reward model against an unsupervised latent state.

One prediction of this hypothesis would be that we should expect general unsupervised models, potentially attached to RL agents, to naturally develop all kinds of 'mirror neurons' if trained in a multi-agent environment.

Epistemic Status: Pretty speculative but built on scientific literature. This post builds off my previous post on learnt reward models. Crossposted from my personal blog.

Empathy, the ability to feel another's pain or to 'put yourself in their shoes' is often considered to be a fundamental human cognitive ability, and one that undergirds our social abilities and moral intuitions. As so much of human's success and dominance as a species comes down to our superior social organization, empathy has played a vital role in our history. Whether we can build artificial empathy into AI systems also has clear relevance to AI alignment. If we can create empathic AIs, then it may become easier to make an AI be receptive to human values, even if humans can no longer completely control it. Such an AI seems unlikely to just callously wipe out all humans to make a few more paperclips. Empathy is not a silver bullet however. Although (most) humans have empathy, human history is still in large part a history of us waging war against each other, and there are plenty of examples of humans and other animals perpetuating terrible cruelty on enemies and outgroups.

A reasonable literature has grown up in psychology, cognitive science, and neuroscience studying the neural bases of empathy and its associated cognitive processes. We now know a fair amount about the brain regions involved in empathy, what kind of tasks can reliably elicit it, how individual differences in empathy work, as well as the neuroscience underlying disorders such as psychopathy, autism, and alexithmia which result in impaired empathic processing. However, much of this research does not grapple with the fundamental question of why we possess empathy at all. Typically, it seems to be tacitly assumed that, due to its apparent complexity, empathy must be some special cognitive module which has evolved separately and deliberately due to its fitness benefits. From an evolutionary theory perspective, empathy is often assumed to have evolved because of its adaptive function in promoting reciprocal altruism. The story goes that animals that are altruistic, at least in certain cases, tend to get their altruism reciprocated and may thus tend to out-reproduce other animals that are purely selfish. This would be of especial importance in social species where being able to form coalitions of likeminded and reciprocating individual is key to obtaining power and hence reproductive opportunities. If they could, such coalitions would obviously not include purely selfish animals who never reciprocated any benefits they received from other group members. Nobody wants to be in a coalition with an obviously selfish freerider.

Here, I want to argue a different case. Namely that the basic cognitive phenomenon of empathy -- that of feeling and responding to the emotions of others as if they were your own, is not a special cognitive ability which had to be evolved for its social benefit, but instead is a natural consequence of our (mammalian) cognitive architecture and therefore arises by default. Of course, given this base empathic capability, evolution can expand, develop, and contextualize our natural empathic responses to improve fitness. In many cases, however, evolution actually reduces our native empathic capacity -- for instance, we can contextualize our natural empathy to exclude outgroup members and rivals.

The idea is that empathy fundamentally arises from using learnt reward models to mediate between a low-dimensional set of primary rewards and reinforcers and the high dimensional latent state of an unsupervised world model. In the brain, much of the cortex is thought to be randomly initialized and implements a general purpose unsupervised (or self-supervised) learning algorithm such as predictive coding to build up a general purpose world model of its sensory input. By contrast, the reward signals to the brain are very low dimensional (if not, perhaps, scalar). There is thus a fearsome translation problem that the brain needs to solve: learning to map the high dimensional cortical latent space into a predicted reward value. Due to the high dimensionality of the latent space, we cannot hope to actually experience the reward for every possible state. Instead, we need to learn a reward model that can generalize to unseen states. Possessing such a reward model is crucial both for learning values (i.e. long term expected rewards), predicting future rewards from current state, and performing model based planning where we need the ability to query the reward function at hypothetical imagined states generated during the planning process. We can think of such a reward model as just performing a simple supervised learning task: given a dataset of cortical latent states and realized rewards (given the experience of the agent), predict what the reward will be in some other, non-experienced cortical latent state.

The key idea that leads to empathy is the fact that, if the world model performs a sensible compression of its input data and learns a useful set of natural abstractions, then it is quite likely that the latent codes for the agent performing some action or experiencing some state, and another, similar, agent performing the same action or experiencing the same state, will end up close together in the latent space. If the agent's world model contains natural abstractions for the action, which are invariant to who is performing it, then a large amount of the latent code is likely to be the same between the two cases. If this is the case, then the reward model might 'mis-generalize' to assign reward to another agent performing the action or experiencing the state rather than the agent itself. This should be expected to occur whenever the reward model generalizes smoothly and the latent space codes for the agent and another are very close in the latent space. This is basically 'proto-empathy' since an agent, even if its reward function is purely selfish, can end up assigning reward (positive or negative) to the states of another due to the generalization abilities of the learnt reward function [1].

In neuroscience, discussions of action and state invariance often centre around 'mirror neurons', which are neurons which fire regardless of whether the animal is performing some action or whether it is just watching some other animal performing the same action. But, given an unsupervised world model, mirror neurons are exactly what we should expect to see. They are simply neurons which respond to the abstract action and are invariant to the performer of the action. This kind of invariance is no weirder than translation invariance for objects, and simply is a consequence of the fact that certain actions are 'natural abstractions' and not fundamentally tied with who is performing them [2].

Completely avoiding empathy at the level of the latent space would require learning an entirely ego-centric world model, such that any action I perform, or any feeling I feel, is represented as a completely different and orthogonal latent state to any other agent performing the same action or experiencing the same feeling. There are good reasons for not naturally learning this kind of entirely ego-centric world model with a complete separation in latent space between concepts involving self and involving others. The primary one is its inefficiency: it requires a duplication of all concepts into a concept-X-as-relates-to-me and concept-X-as-relates-to-others. This would require, at best, twice as much space to store and twice as much data to learn as a mingled world model where self and other are not completely separated.

This theory of empathy makes some immediate predictions. Firstly, the more 'similar' the agent and its empathic target are, the more likely the latent state codes are to be similar, and hence the more likely reward generalization is, leading to greater empathy. Secondly, empathy is a continuous spectrum, since closeness-in-the-latent-space can vary continuously. This is exactly what we see in humans, where large-scale studies find that humans are better at empathising with those closer to them, both within species -- i.e. people empathise more with those they consider part of their in-group -- and across species, where the amount of empathy people show a species is closely correlated with its phylogenetic divergence time from us. Thirdly, the degree of empathy depends on both the ability of the reward model to generalize and the ability of the world model to produce a latent space which represents the natural abstractions of its environment well. This suggests, perhaps, that empathy is a capability that scales along with model capacity -- larger, more powerful reward and world models may tend to lead to greater, more expansive empathic responses, although such models may also have reward models that can make finer-grained distinctions.
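The first two predictions (empathy falls off with dissimilarity, and does so continuously) can be illustrated with a toy probe. This is a sketch under stated assumptions: the latent layout, the kernel smoother shrunk towards zero reward far from experience, and the `bandwidth` and `prior_weight` values are all hypothetical choices, not something taken from the literature.

```python
import math

def make_reward_model(experiences, bandwidth=1.0, prior_weight=0.5):
    """Kernel smoother shrunk towards zero reward far from any experienced state."""
    def predict(z):
        weights = [
            math.exp(-sum((a - b) ** 2 for a, b in zip(z, z_i)) / (2 * bandwidth ** 2))
            for z_i, _ in experiences
        ]
        weighted = sum(w * r for w, (_, r) in zip(weights, experiences))
        return weighted / (sum(weights) + prior_weight)
    return predict

# Latent layout (an assumption): (pain_feature, dissimilarity_from_self).
self_experiences = [
    ((1.0, 0.0), -1.0),  # self in pain
    ((0.0, 0.0), 0.0),   # self not in pain
]
model = make_reward_model(self_experiences)

# Probe with 'test stimuli': another agent in pain at increasing latent distance.
distances = [0.0, 0.5, 1.0, 2.0, 4.0]
empathy = [abs(model((1.0, d))) for d in distances]
```

Here `empathy` shrinks smoothly as the other agent's latent code moves away from the agent's own, which is the continuous spectrum described above. The same kind of probing with test stimuli could in principle be applied to a trained reward model.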

Finally, this phenomenon should be fairly fundamental. We should expect it to occur whenever we have a learnt reward model predicting the reward or values of a general unsupervised world-model latent state. This is, and will increasingly be, a common setup for agents in environments for which we cannot trivially evaluate the 'true reward function', especially over hypothetical imagined states. Moreover, this is also the cognitive architecture used by mammals and birds, which possess a set of subcortical structures which evaluate and dispense rewards, and a general unsupervised world-model implemented in the cortex (or pallium for birds). This is also exactly what we see, with empathic behaviour being apparently commonplace in the animal kingdom.

The mammalian cognitive architecture that results in empathy is actually pretty sensible and it is possible that the natural path to AGIs is with such an architecture. This argument doesn't apply to classical utility maximizers based on model-based planning, such as AIXI, but as soon as you don't have a utility oracle, which can query the utility function in arbitrary states, you are stuck instead with learning a reward function based on a set of 'ground-truth' actually-experienced rewards. Once you start learning a reward function, it is possible that the generalization this produces can result in empathy, even for some 'purely selfish' utility functions. This is potentially quite important for AI alignment. It means that, if we build AGIs with learnt reward functions, and the latent states in their world model involving humans are quite close to their latent states involving themselves, then it is very possible that they will naturally develop some kind of implicit empathy towards humans. If this happened, it would be quite a positive development from an alignment perspective, since it would mean that the AGI intrinsically cares, at least to some degree, about human experiences. The extent to which this occurs would be predicted to depend upon the similarity in the latent space between the AGI's representation of human states and its own. The details of the AGI's world model and training curriculum would likely be very important, as would be the nature of its embodiment. There are reasons to be hopeful about this, since the AGI will almost certainly be trained almost entirely on human text data and human-created environments, and be given human-relevant goals. This will likely lead to it gaining quite a good understanding of our experiences, which could lead to closeness in the latent space. On the negative side, the phenomenology and embodiment of the AGI is likely to be very different -- in a distributed datacenter interacting directly with the internet, as opposed to having a physical bipedal body and small, non-copyable brain.

Given reasonable interpretability and control tooling, this line of thought could lead to methods to try to make an AGI more naturally empathic towards humans. This could include carefully designing the architecture or training data of the reward model to lead it to naturally generalize towards human experiences. Alternatively, we may try to directly edit the latent space so as to bring our desired empathic targets within the range of generalization of the reward models. Finally, during training, by presenting the AGI with a number of 'test stimuli', we should be able to precisely measure the extent and kinds of empathy it has. Similarly, interpretability on the reward model could potentially reveal the expected contours of empathic responses.

Empathy in the brain

Now that we have thought about the general phenomenon and its applications to AI safety, let's turn towards the neuroscience and the specific cognitive architecture that is implemented in mammals and birds. Traditionally, the neuroscience of empathy splits up our natural conception of 'empathy' into three distinct phenomena, each underpinned by a dissociable neural circuit. These three facets are neural resonance, prosocial motivation, and mentalizing/theory of mind. Neural resonance is the visceral 'feeling of somebody else's pain' that we experience during empathy, and it is close to the reward model evaluation of others' states we discuss here. Prosocial motivation is essentially the desire to act on empathic feelings and be altruistic because of them, even if it comes at personal cost. Finally, mentalizing is the ability to 'put oneself in another's shoes' -- i.e. to simulate their inner cognitive processes. These processes are argued to be implemented in dissociable neural circuits. Mentalizing is thought to occur primarily in the high-level association areas of cortex, typically including the precuneus and especially the temporo-parietal junction (TPJ). Neural resonance is thought to occur primarily by utilizing cortical areas involved with sensorimotor and emotional processing, such as the anterior cingulate and insular cortex, as well as subcortical structures such as the amygdala. Finally, prosocial motivation is thought to be implemented in the regions typically related to goal-directed behaviour, such as the VTA in the mid-brain and the orbitofrontal and prefrontal cortex. However, in practice, for ecologically realistic stimuli, these processes do not occur in isolation but always tend to co-occur with each other. Furthermore, empathy in general, and neural resonance especially, also appears to be modality specific. For instance, viewing a conspecific in pain will tend to activate the 'pain matrix': the network of brain regions which are also activated when you yourself are in pain.

Mentalizing, despite being the most 'cognitively advanced' process, is actually the easiest to explain and is not really related to empathy at all. Instead, we should expect mentalizing and theory of mind to just emerge naturally in any unsupervised world-model with sufficient capacity and data. That is, modelling other agents as agents, and explicitly simulating their cognitive state is a natural abstraction which has an extremely high payoff in terms of predictive loss. Intuitively, this is quite obvious. Other agents apart from yourself are a real phenomenon that exists in the world. Moreover, if you can track the mental state of other agents, you can often make many very important predictions about their current and future behaviour which you cannot if you model them as either non-agentic phenomena or alternatively as simple stimulus-response mappings without any internal state.

However, because it is expected to arise from any sufficiently powerful unsupervised learning model, mentalizing is completely dissociable from having any motivational component based on empathy. An expected utility maximizer like AIXI should possess a very sophisticated theory of mind and mentalizing capability, but zero empathy. Like the classical depiction of a psychopath, AIXI can perfectly simulate your mental state, but feels nothing if its actions cause you distress. It only simulates you so as to better exploit you to serve its goals. Of course, if we do have motivational empathy, then we have a desire to address and reduce the pain of others, and being able to mentalize is very useful for coming up with effective plans to do that. This, I suspect, is why mentalizing regions are so often co-activated in empathy tasks.

Secondly, there is prosocial motivation. My argument is that this is precisely the kind of reward model generalization presented earlier. Specifically, the brain possesses a reward model learnt in the VTA and basal ganglia based on cortical inputs, which predicts the values and rewards expected given certain cortical states. These predicted rewards are then used to train high-level cortical controllers to query the unsupervised world model to obtain action plans with high expected rewards. To do so, the brain utilizes a learnt reward model based on associating cortical latent states with previously experienced primary rewards fed through to the VTA. As described earlier, if this reward model misgeneralizes so as to assign reward to a state of others' pain or pleasure, as opposed to our own, then the brain should naturally develop this kind of prosocial motivation, in exactly the same way it develops motivation to reduce its own pain and increase its own pleasure based on the same reward model.

Finally, we come to neural resonance. I would argue this is the physiologically oldest and most basic form of empathy and occurs due to a very similar mechanism of model misgeneralization. Only this time, it is not the classic RL-based reward model in the VTA that is misgeneralizing, but a separate reflex-association model implemented primarily in the amygdala and related circuitry such as the stria terminalis and periaqueductal grey. In my previous post, I argue that there are two separate behavioural systems in the brain: one reward-based system built on RL, and one which predicts brainstem reflexes and other visceral sensations based on supervised predictive learning -- i.e. it learns to associate a current state with a future visceral sensation. While the prosocial motivation system is fundamentally based on the RL system, neural resonance empathy arises from this brainstem-prediction circuit. That is, we have a circuit that is constantly parsing cortical latent states for information predictive of experiencing pain, or needing to flinch, or needing to fight or flee, or any other visceral sensation or decision controlled by the brainstem. When this circuit misgeneralizes, it takes a cortical latent representation of another agent experiencing pain, sees that it contains many 'pain-like' features, and then predicts that the agent itself will experience pain shortly, and thus drives the visceral sensation and compensatory reflexes. This, we argue, is the root of neural resonance.

Widespread empathy in animals

Our theory argues that empathy is a fundamental and basic result of the mammalian cognitive architecture, and hence a clear prediction that results is that essentially all mammals should show some degree of empathy -- primarily in terms of neural resonance and prosocial behaviour. Although the evidence on this is not 100% conclusive for all mammals, almost every animal species studied appears to show at least some degree of empathy towards conspecifics. A large amount of work has been done investigating this in mice and rats. Studies have found that rats which see another rat being given painful electric shocks become more sensitive to pain themselves -- evidence of neural resonance. Similarly it has been shown that rats will not pull a lever which gives them food (a positive reward) if it leads to another rat being given a painful shock. Other species for which there is much evidence of empathy include elephants, dolphins and, of course, apes and monkeys.

Outside of apes and monkeys, dolphins and elephants, as well as corvids, also appear in anecdotal reports and the scientific literature to have many complex forms of empathy. For instance, both dolphins and elephants appear to take care of and nurse sick or injured individuals, even non-kin, as well as grieve for dead conspecifics. They also appear to be able to use mentalizing behaviours to anticipate the needs of their conspecifics -- for instance bringing them food or supporting them if injured. Apes (as well as corvids and human children) also show all of these empathic behaviours, and are also known to perform 'consolation behaviours' where bystanders will go up to and comfort distressed fellows, especially the loser of a dominance fight. This behaviour can be shown to occur both in captivity and in the wild.

Contextual modulation of empathy

Overall, while we argue that the basic fundamental forms of empathy effectively arise due to misgeneralization of learnt reward models, and are thus a fundamental feature of this kind of cognitive architecture, this does not mean that our empathic responses are not also sculpted by evolution. Many of our emotions and behaviours take this basic level of empathy and elaborate on it in various ways. Much of our social behaviour and reciprocal altruism is based on a foundation of empathy. Moreover, evolutionarily hardwired behaviours like parenting and social bonding are likely deeply intertwined with our proto-empathic ability. An example of this is the hormone oxytocin, which is known to boost our empathic response, as well as being deeply involved in both parental care and social bonding.

On the other hand, evolution may also have given us mechanisms that suppress our natural empathic response rather than accentuate it. Humans, as well as other animals, are easily able to override or inhibit their sense of empathy when it comes to outgroups or rivals of various kinds, exactly as predicted by evolutionary theory. This is also why normal, empathetic people are reliably able to sanction or inflict terrible cruelties on others. Humans, chimpanzees, and rodents are all able to modulate their empathic response to be greater for those they are socially close with and less for defectors or rivals (with the response often becoming negative, i.e. schadenfreude). Similarly, an MEG study of adolescents who grew up in an intractable conflict (the Israel-Palestine conflict) found that both Israeli-Jews and Palestinian-Arabs had an initial spike of empathy towards both ingroup and outgroup stimuli. However, this was followed by a top-down inhibition of empathy towards the outgroup and an increase of empathy towards the ingroup. This top-down inhibition of empathy must be cortically based and learnt from scratch based on contextual factors (since evolution cannot know a priori who is ingroup and outgroup). The fact that top-down inhibition of the 'natural' empathy can be learnt is probably also why, empirically, research on genocides has typically found that any kind of mass murder requires a long period of dehumanization of the enemy, to suppress people's natural empathic response to them.

All of this suggests that while the fundamental proto-empathy generated by the reward model generalization is automatic, the response can also be shaped by top-down cortical context. From a machine learning perspective, this means that humans must have some kind of cortical learnt meta-reward model which can edit the reward predictions flexibly based on information and associations coming from the world-model itself.
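One very simple way to picture such a meta-reward model is as a learnt, context-dependent gain applied to the automatic reward prediction. The sketch below is purely illustrative: the multiplicative form, the context labels, and the gain values are all assumptions, and a real system would presumably learn something far richer than a lookup table.

```python
def modulated_reward(base_prediction, context_gain):
    """Scale the automatic (bottom-up) reward prediction by a context-dependent gain."""
    return context_gain * base_prediction

# Hypothetical learnt gains: above 1 amplifies empathy, between 0 and 1 inhibits it,
# and below 0 flips its sign (schadenfreude).
context_gains = {"ingroup": 1.5, "stranger": 1.0, "outgroup": 0.2, "rival": -0.5}

# Automatic prediction for seeing another agent in pain (an assumed value).
base = -0.6
responses = {who: modulated_reward(base, gain) for who, gain in context_gains.items()}
```

Under these assumed gains, another's pain is most aversive for the ingroup, much less so for the outgroup, and mildly rewarding for a rival, while the bottom-up prediction itself is unchanged.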

Psychopaths etc

Another interesting question for this theory is how and why psychopathy, or other disorders of empathy, exist. If empathy is such a fundamental phenomenon, how do we appear to get impaired empathy in various disorders? Our response to this is that the classic cultural depiction of a psychopath as someone otherwise normal (and often highly functioning) but just lacking in empathy is not really correct. In fact, psychopaths do show other deficits, typically in emotional control, disinhibited behaviour, blunted affect (not really feeling any emotions) and often pathological risk-taking. Neurologically, psychopathy is typically associated with a hypoactive and/or abnormal amygdala, among other deficits, often including impaired VTA connectivity leading to deficits in decision-making and learning from reinforcement (especially punishments). According to our theory, this would suggest that psychopathy is not really a syndrome of lacking empathy, but instead one of having abnormal and poor learnt reward models mapping between base reward and visceral reflexes and cortical latent states. Abnormal empathy is then a consequence of the abnormal reward model and its (lack of) generalization ability.

Our theory is very similar to the Perception-Action-Mechanism (PAM), and the very similar 'simulation theory' of empathy. Both argue that empathy occurs because our brain essentially learns to map representations of others experiencing some state to our own representations for that state. Our contribution is essentially to argue that this isn't some kind of special ability that must be evolved, but rather a natural outcome of an architecture which learns a reward model against an unsupervised latent state.

One prediction of this hypothesis would be that we should expect general unsupervised models, potentially attached to RL agents, to naturally develop all kinds of 'mirror neurons' if trained in a multi-agent environment.
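Testing this prediction would need some measure of how 'mirror-like' a unit is. One possible measure, offered here only as an assumption and not as an established metric, is the correlation between a unit's responses across a set of actions when the agent performs them and when it observes another agent performing them. The activations below are made up for illustration.

```python
import math

def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (assumes non-zero variance)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    norm_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    norm_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (norm_x * norm_y)

def mirror_index(acts_self, acts_other):
    """Hypothetical score: tuning similarity between 'self acts' and 'other acts'."""
    return pearson(acts_self, acts_other)

# Made-up activations of two units over the same four actions in both conditions.
unit_a_self, unit_a_other = [0.9, 0.1, 0.0, 0.2], [0.8, 0.2, 0.1, 0.1]
unit_b_self, unit_b_other = [0.9, 0.1, 0.0, 0.2], [0.0, 0.3, 0.1, 0.2]

score_a = mirror_index(unit_a_self, unit_a_other)  # performer-invariant tuning
score_b = mirror_index(unit_b_self, unit_b_other)  # tuning that differs by performer
```

A unit with a score near 1 responds to the abstract action regardless of who performs it, which is what the hypothesis expects to find in the latent space of a world model trained in a multi-agent environment.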

Epistemic Status: Pretty speculative but built on scientific literature. This post builds off my previous post on learnt reward models. Crossposted from my personal blog.

Empathy, the ability to feel another's pain or to 'put yourself in their shoes' is often considered to be a fundamental human cognitive ability, and one that undergirds our social abilities and moral intuitions. As so much of human's success and dominance as a species comes down to our superior social organization, empathy has played a vital role in our history. Whether we can build artificial empathy into AI systems also has clear relevance to AI alignment. If we can create empathic AIs, then it may become easier to make an AI be receptive to human values, even if humans can no longer completely control it. Such an AI seems unlikely to just callously wipe out all humans to make a few more paperclips. Empathy is not a silver bullet however. Although (most) humans have empathy, human history is still in large part a history of us waging war against each other, and there are plenty of examples of humans and other animals perpetuating terrible cruelty on enemies and outgroups.

A reasonable literature has grown up in psychology, cognitive science, and neuroscience studying the neural bases of empathy and its associated cognitive processes. We now know a fair amount about the brain regions involved in empathy, what kind of tasks can reliably elicit it, how individual differences in empathy work, as well as the neuroscience underlying disorders such as psychopathy, autism, and alexithmia which result in impaired empathic processing. However, much of this research does not grapple with the fundamental question of why we possess empathy at all. Typically, it seems to be tacitly assumed that, due to its apparent complexity, empathy must be some special cognitive module which has evolved separately and deliberately due to its fitness benefits. From an evolutionary theory perspective, empathy is often assumed to have evolved because of its adaptive function in promoting reciprocal altruism. The story goes that animals that are altruistic, at least in certain cases, tend to get their altruism reciprocated and may thus tend to out-reproduce other animals that are purely selfish. This would be of especial importance in social species where being able to form coalitions of likeminded and reciprocating individual is key to obtaining power and hence reproductive opportunities. If they could, such coalitions would obviously not include purely selfish animals who never reciprocated any benefits they received from other group members. Nobody wants to be in a coalition with an obviously selfish freerider.

Here, I want to argue a different case. Namely that the basic cognitive phenomenon of empathy -- that of feeling and responding to the emotions of others as if they were your own, is not a special cognitive ability which had to be evolved for its social benefit, but instead is a natural consequence of our (mammalian) cognitive architecture and therefore arises by default. Of course, given this base empathic capability, evolution can expand, develop, and contextualize our natural empathic responses to improve fitness. In many cases, however, evolution actually reduces our native empathic capacity -- for instance, we can contextualize our natural empathy to exclude outgroup members and rivals.

The idea is that empathy fundamentally arises from using learnt reward models to mediate between a low-dimensional set of primary rewards and reinforcers and the high dimensional latent state of an unsupervised world model. In the brain, much of the cortex is thought to be randomly initialized and implements a general purpose unsupervised (or self-supervised) learning algorithm such as predictive coding to build up a general purpose world model of its sensory input. By contrast, the reward signals to the brain are very low dimensional (if not, perhaps, scalar). There is thus a fearsome translation problem that the brain needs to solve: learning to map the high dimensional cortical latent space into a predicted reward value. Due to the high dimensionality of the latent space, we cannot hope to actually experience the reward for every possible state. Instead, we need to learn a reward model that can generalize to unseen states. Possessing such a reward model is crucial both for learning values (i.e. long term expected rewards), predicting future rewards from current state, and performing model based planning where we need the ability to query the reward function at hypothetical imagined states generated during the planning process. We can think of such a reward model as just performing a simple supervised learning task: given a dataset of cortical latent states and realized rewards (given the experience of the agent), predict what the reward will be in some other, non-experienced cortical latent state.

The key idea that leads to empathy is the fact that, if the world model performs a sensible compression of its input data and learns a useful set of natural abstractions, then it is quite likely that the latent codes for the agent performing some action or experiencing some state, and another, similar, agent performing the same action or experiencing the same state, will end up close together in the latent space. If the agent's world model contains natural abstractions for the action, which are invariant to who is performing it, then a large amount of the latent code is likely to be the same between the two cases. If this is the case, then the reward model might 'mis-generalize' to assign reward to another agent performing the action or experiencing the state rather than the agent itself. This should be expected to occur whenever the reward model generalizes smoothly and the latent space codes for the agent and another are very close in the latent space. This is basically 'proto-empathy' since an agent, even if its reward function is purely selfish, can end up assigning reward (positive or negative) to the states of another due to the generalization abilities of the learnt reward function [1].

In neuroscience, discussions of action and state invariance often centre around 'mirror neurons', which are neurons which fire regardless of whether the animal is performing some action or whether it is just watching some other animal performing the same action. But, given an unsupervised world model, mirror neurons are exactly what we should expect to see. They are simply neurons which respond to the abstract action and are invariant to the performer of the action. This kind of invariance is no weirder than translation invariance for objects, and simply is a consequence of the fact that certain actions are 'natural abstractions' and not fundamentally tied with who is performing them [2].

Completely avoiding empathy at the latent space model would require learning an entirely ego-centric world model, such that any action I perform, or any feeling I feel, is represented as a completely different and orthogonal latent state to any other agent performing the same action or experiencing the same feeling. There are good reasons for not naturally learning this kind of entirely ego-centric world model with a complete separation in latent space between concepts involving self and involving others. The primary one is its inefficiency: it requires a duplication of all concepts into a concept-X-as-relates-to-me and concept-X-as-relates-to-others. This would require at best twice as much space to store and twice as much data to be able to learn than a mingled world model where self and other are not completely separated.

This theory of empathy makes some immediate predictions. Firstly, the more 'similar' the agent and its empathic target is, the more likely the latent state codes are to be similar, and hence the more likely reward generalization is, leading to greater empathy. Secondly, empathy is a continuous spectrum, since closeness-in-the-latent-space can vary continuously. This is exactly what we see in humans where large-scale studies find that humans are better at empathising with those closer to them, both within species -- i.e. people empathise more with those they consider in-groups, and across species where the amount of empathy people show a species is closely correlated with its phylogenetic divergence time from us. Thirdly, the degree of empathy depends on both the ability of the reward model to generalize and the world model to produce a latent space which well represents the natural abstractions of its environment. This suggests, perhaps, that empathy is a capability that scales along with model capacity -- larger, more powerful reward and world models may tend to lead to greater, more expansive empathic responses, although they potentially may have reward models that can make finer grained distinctions as well.

Finally, this phenomenon should be fairly fundamental. We should expect it to occur whenever we have a learnt reward model predicting the reward or values of a general unsupervised world-model latent state. This is, and will increasingly be a common setup for when we have agents in environments for which we cannot trivially evaluate the 'true reward function', especially over hypothetical imagined states. Moreover, this is also the cognitive architecture used by mammals and birds which possess a set of subcortical structures which evalaute and dispense rewards, and a general unsupervised world-model implemented in the cortex (or pallium for birds). This is also exactly what we see, with empathic behaviour being apparently commonplace in the animal kingdom.

The mammalian cognitive architecture that results in empathy is actually pretty sensible and it is possible that the natural path to AGIs is with such an architecture. It doesn't apply to classical utility maximizers based on model based planning, such as AIXI, but as soon as you don't have a utility oracle, which can query the utility function in arbitrary states, you are stuck instead with learning a reward function based on a set of 'ground-truth' actually-experienced rewards. Once you start learning a reward function, it is possible that the generalization this produces can result in empathy, even for some 'purely selfish' utility functions. This is potentially quite important for AI alignment. It means that, if we build AGIs with learnt reward functions, and that the latent states in their world model involving humans are quite close to their latent states involving themselves, then it is very possible that they will naturally develop some kind of implicit empathy towards humans. If this happened, it would be quite a positive development from an alignment perspective, since it would mean that the AGI intrisically cares, at least to some degree, about human experiences. The extent to which this occurs would be predicted to depend upon the similarity in the latent space between the AGIs representation of human states and its own. The details of the AGIs world model and training curriculum would likely be very important, as would be the nature of its embodiment. There are reasons to be hopeful about this since the AGI will almost certainly be trained almost entirely on human text data, human-created environments, and be given human relevant goals. This will likely lead to it gaining quite a good understanding of our experiences, which could lead to closeness in the latent space. 
On the negative side, the phenomomenology and embodiment of the AGI is likely to be very different -- in a distributed datacenter interacting directly with the internet, as opposed to having a physical bipedal body and small, non-copyable brain.

Given reasonable interpretability and control tooling, this line of thought could lead to methods to try to make an AGI more naturally empathic towards humans. This could include carefully designing the architecture or training data of the reward model to lead it to naturally generalize towards human experiences. Alternatively, we may try to directly edit the latent space to as to bring our desired empathic targets to within the range of generalization of the reward models. Finally, during training, by presenting it with a number of 'test stimuli', we should be able to precisely measure the extent and kinds of empathy it has. Similarly, interpretability on the reward model could potentially reveal the expected contours of empathic responses.

Empathy in the brain

Now that we have thought about the general phenomenon and its applications to AI safety, let's turn towards the neuroscience and the specific cognitive architecture that is implemented in mammals and birds. Traditionally, the neuroscience of empathy splits up our natural conception of 'empathy' into three distinct phenomena, each underpinned by a dissociable neural circuit. These three facets are neural resonance, prosocial motivation, and mentalizing/theory of mind. Neural resonance is the visceral 'feeling of somebody else's pain' that we experience during empathy, and it is close to the reward model evaluation of others' states we discuss here. Prosocial motivation is essentially the desire to act on empathic feelings and be altruistic because of them, even if it comes at personal cost. Finally, mentalizing is the ability to 'put oneself in another's shoes' -- i.e. to simulate their inner cognitive processes. Mentalizing is thought to occur primarily in the high-level association areas of cortex, typically including the precuneus and especially the temporo-parietal junction (TPJ). Neural resonance is thought to occur primarily in cortical areas involved with sensorimotor and emotional processing, such as the anterior cingulate and insular cortex, as well as the amygdala and other subcortical structures. Finally, prosocial motivation is thought to be implemented in the regions typically related to goal-directed behaviour, such as the VTA in the mid-brain and the orbitofrontal and prefrontal cortex. However, in practice, for ecologically realistic stimuli, these processes do not occur in isolation but tend to co-occur with each other. Furthermore, empathy in general, and neural resonance especially, appears to be modality-specific. For instance, viewing a conspecific in pain will tend to activate the 'pain matrix': the network of brain regions which are also activated when you yourself are in pain.

Mentalizing, despite being the most 'cognitively advanced' process, is actually the easiest to explain and is not really related to empathy at all. Instead, we should expect mentalizing and theory of mind to just emerge naturally in any unsupervised world-model with sufficient capacity and data. That is, modelling other agents as agents, and explicitly simulating their cognitive state is a natural abstraction which has an extremely high payoff in terms of predictive loss. Intuitively, this is quite obvious. Other agents apart from yourself are a real phenomenon that exists in the world. Moreover, if you can track the mental state of other agents, you can often make many very important predictions about their current and future behaviour which you cannot if you model them as either non-agentic phenomena or alternatively as simple stimulus-response mappings without any internal state.

However, because it is expected to arise from any sufficiently powerful unsupervised learning model, mentalizing is completely dissociable from having any motivational component based on empathy. An expected utility maximizer like AIXI should possess a very sophisticated theory of mind and mentalizing capability, but zero empathy. Like the classical depiction of a psychopath, AIXI can perfectly simulate your mental state, but feels nothing if its actions cause you distress. It only simulates you so as to better exploit you to serve its goals. Of course, if we do have motivational empathy, then we have a desire to address and reduce the pain of others, and being able to mentalize is very useful for coming up with effective plans to do that. This, I suspect, is why mentalizing regions are so often co-activated during empathy tasks.

Secondly, there is prosocial motivation. My argument is that this is precisely the kind of reward model generalization presented earlier. Specifically, the brain possesses a reward model, learnt in the VTA and basal ganglia from cortical inputs, which predicts the values and rewards expected given certain cortical states. These predicted rewards are then used to train high-level cortical controllers to query the unsupervised world model to obtain action plans with high expected rewards. The reward model itself is learnt by associating cortical latent states with previously experienced primary rewards fed through to the VTA. As discussed previously, if this reward model misgeneralizes so as to assign reward to a state of others' pain or pleasure, as opposed to our own, then the brain should naturally develop this kind of prosocial motivation, in exactly the same way it develops motivation to reduce its own pain and increase its own pleasure based on the same reward model.
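
A minimal toy sketch of this misgeneralization is given below, under strong simplifying assumptions: the 'cortical latent state' is a hypothetical three-dimensional vector (pain intensity, a self marker, an other marker), the reward model is linear, and primary reward is only ever received for the agent's own pain. None of these choices come from the neuroscience; they only illustrate the mechanism.

```python
import random


def latent(pain, is_self):
    # Hypothetical latent state: [pain intensity, self marker, other marker].
    return [pain, 1.0 if is_self else 0.0, 0.0 if is_self else 1.0]


def predict(weights, x):
    # Linear reward model: predicted reward for a latent state.
    return sum(w * xi for w, xi in zip(weights, x))


def train_reward_model(steps=5000, lr=0.05, seed=0):
    rng = random.Random(seed)
    weights = [0.0, 0.0, 0.0]
    for _ in range(steps):
        pain = rng.random()
        x = latent(pain, is_self=True)  # primary reward only arrives for own states
        target = -pain                  # own pain is negatively rewarding
        err = predict(weights, x) - target
        weights = [w - lr * err * xi for w, xi in zip(weights, x)]
    return weights


weights = train_reward_model()
own = predict(weights, latent(0.8, is_self=True))
other = predict(weights, latent(0.8, is_self=False))
print(own, other)
```

Because the 'other' marker never appears during training, its weight is never updated, and the learnt aversion to the shared pain feature carries over to states in which another agent is in pain. A model with a richer latent space could of course learn to separate the two cases, which is the kind of top-down modulation discussed later in the post.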

Finally, we come to neural resonance. I would argue this is the physiologically oldest and most basic form of empathy, and that it occurs due to a very similar mechanism of model misgeneralization. Only this time, it is not the classic RL-based reward model in the VTA that is misgeneralizing, but a separate reflex-association model implemented primarily in the amygdala and related circuitry such as the stria terminalis and periaqueductal grey. In my previous post, I argue that there are two separate behavioural systems in the brain: one that is reward-based and built on RL, and one which predicts brainstem reflexes and other visceral sensations based on supervised predictive learning -- i.e. it associates a current state with a future visceral sensation. While the prosocial motivation system is fundamentally based on the RL system, neural resonance arises from this brainstem-prediction circuit. That is, we have a circuit that is constantly parsing cortical latent states for information predictive of experiencing pain, or needing to flinch, or needing to fight or flee, or any other visceral sensation or decision controlled by the brainstem. When this circuit misgeneralizes, it takes a cortical latent representation of another agent experiencing pain, sees that it contains many 'pain-like' features, and then predicts that the agent itself will experience pain shortly, and thus drives the visceral sensation and compensatory reflexes. This, we argue, is the root of neural resonance.

Widespread empathy in animals

Our theory argues that empathy is a fundamental and basic result of the mammalian cognitive architecture, and hence a clear prediction is that essentially all mammals should show some degree of empathy -- primarily in terms of neural resonance and prosocial behaviour. Although the evidence on this is not 100% conclusive for all mammals, almost every animal species studied appears to show at least some degree of empathy towards conspecifics. A large amount of work has been done investigating this in mice and rats. Studies have found that rats which see another rat being given painful electric shocks become more sensitive to pain themselves -- evidence of neural resonance. Similarly, it has been shown that rats will not pull a lever which gives them food (a positive reward) if it leads to another rat being given a painful shock. Other species for which there is much evidence of empathy include elephants, dolphins and, of course, apes and monkeys.

Outside of apes and monkeys, dolphins and elephants, as well as corvids, also appear in both anecdotal reports and the scientific literature to have many complex forms of empathy. For instance, both dolphins and elephants appear to take care of and nurse sick or injured individuals, even non-kin, as well as grieve for dead conspecifics. They also appear to be able to use mentalizing behaviours to anticipate the needs of their conspecifics -- for instance bringing them food or supporting them if injured. Apes (as well as corvids and human children) also show all of these empathic behaviours, and are also known to perform 'consolation behaviours' where bystanders will go up to and comfort distressed fellows, especially the loser of a dominance fight. This behaviour has been observed both in captivity and in the wild.

Contextual modulation of empathy

Overall, while we argue that the basic fundamental forms of empathy effectively arise due to misgeneralization of learnt reward models, and are thus a fundamental feature of this kind of cognitive architecture, this does not mean that our empathic responses are not also sculpted by evolution. Many of our emotions and behaviours take this basic level of empathy and elaborate on it in various ways. Much of our social behaviour and reciprocal altruism is based on a foundation of empathy. Moreover, evolutionarily hardwired behaviours like parenting and social bonding are likely deeply intertwined with our proto-empathic ability. An example of this is the hormone oxytocin, which is known to boost our empathic response, as well as being deeply involved in both parental care and social bonding.

On the other hand, evolution may also have given us mechanisms that suppress our natural empathic response rather than accentuate it. Humans, as well as other animals, are easily able to override or inhibit their sense of empathy when it comes to outgroups or rivals of various kinds, exactly as predicted by evolutionary theory. This is also why normal empathetic people are reliably able to sanction or inflict terrible cruelties on others. Humans, chimpanzees, and rodents are all able to modulate their empathic response to be greater for those they are socially close with and less for defectors or rivals (with the response often turning negative, into schadenfreude). Similarly, an MEG study of adolescents who grew up in an intractable conflict (the Israel-Palestine conflict) found that both Israeli-Jews and Palestinian-Arabs had an initial spike of empathy towards both ingroup and outgroup stimuli. However, this was followed by a top-down inhibition of empathy towards the outgroup and an increase of empathy towards the ingroup. This top-down inhibition of empathy must be cortically based and learnt from scratch based on contextual factors (since evolution cannot know a priori who is ingroup and outgroup). The fact that top-down inhibition of the 'natural' empathy can be learnt is probably also why, empirically, research on genocides has typically found that any kind of mass murder requires a long period of dehumanization of the enemy, to suppress people's natural empathic response to them.

All of this suggests that while the fundamental proto-empathy generated by the reward model generalization is automatic, the response can also be shaped by top-down cortical context. From a machine learning perspective, this means that humans must have some kind of cortical learnt meta-reward model which can edit the reward predictions flexibly based on information and associations coming from the world-model itself.
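
One very simple way to picture such a meta-reward model is as a context-dependent gain applied to the automatic reward prediction. The sketch below is only a cartoon of that idea: the gain values and the relationship categories are invented for illustration, and a real learnt modulator would be a function of the world-model state rather than a lookup table.

```python
def modulated_reward(base_reward, context_gain):
    # Top-down context rescales (or flips the sign of) the automatic response.
    return context_gain * base_reward


# Hypothetical learnt gains for different social contexts.
GAINS = {"friend": 1.5, "stranger": 1.0, "rival": -0.3}

# Automatic proto-empathic response to seeing another agent in pain.
base = -0.8

responses = {who: modulated_reward(base, gain) for who, gain in GAINS.items()}
for who, value in responses.items():
    print(who, round(value, 2))
```

With these illustrative numbers, the response is amplified for a friend, unchanged for a stranger, and becomes mildly positive for a rival, which corresponds to schadenfreude.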

Psychopaths etc

Another interesting question for this theory is how and why psychopathy and other empathic disorders exist. If empathy is such a fundamental phenomenon, how do we get impaired empathy in various disorders? Our response to this is that the classic cultural depiction of a psychopath as someone otherwise normal (and often highly functioning) but just lacking in empathy is not really correct. In fact, psychopaths do show other deficits, typically in emotional control, disinhibited behaviour, blunted affect (not really feeling any emotions) and often pathological risk-taking. Neurologically, psychopathy is typically associated with a hypoactive and/or abnormal amygdala, among other deficits, often including impaired VTA connectivity leading to deficits in decision-making and learning from reinforcement (especially punishments). Our theory would suggest that psychopathy is not really a syndrome of lacking empathy, but instead one of having abnormal and poor learnt reward models mapping cortical latent states to base reward and visceral reflexes. Abnormal empathy is then a consequence of the abnormal reward model and its (lack of) generalization ability.

Our theory is very similar to the Perception-Action-Mechanism (PAM), and the closely related 'simulation theory' of empathy. Both argue that empathy occurs because our brain essentially learns to map representations of others experiencing some state to our own representations for that state. Our contribution is essentially to argue that this isn't some kind of special ability that must be evolved, but rather a natural outcome of an architecture which learns a reward model against an unsupervised latent state.

One prediction of this hypothesis would be that we should expect general unsupervised models, potentially attached to RL agents, to naturally develop all kinds of 'mirror neurons' if trained in a multi-agent environment.
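
As a rough sketch of how this prediction might be checked, one could record unit activations while the agent performs an action and while it observes another agent performing the same action, and then look for units that respond in both conditions. The function name, the threshold, and the activation values below are all hypothetical; real analyses would need proper controls and statistics.

```python
def mirror_units(self_acts, other_acts, baseline_acts, margin=0.5):
    """Return indices of units that exceed baseline by `margin` in both conditions."""
    found = []
    for i, (s, o, b) in enumerate(zip(self_acts, other_acts, baseline_acts)):
        if s - b > margin and o - b > margin:
            found.append(i)
    return found


# Made-up mean activations for four units.
self_acts = [0.9, 0.8, 0.1, 0.2]     # agent performs the action itself
other_acts = [0.85, 0.1, 0.9, 0.15]  # agent watches another agent do it
baseline = [0.1, 0.1, 0.1, 0.1]      # neither condition

units = mirror_units(self_acts, other_acts, baseline)
print(units)
```

Here only the first unit responds both to performing and to observing the action, so it is the only candidate 'mirror' unit in this toy data.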
